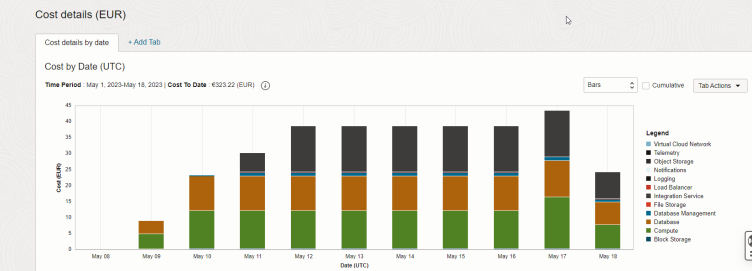

Turn off all the lights at night - Reducing costs by automatically pausing EBS@OCI instances

Cost Analysis dashboard in OCI

Running services on a public cloud platform, even 24/7, is often cheaper in terms of Total Cost of Ownership (TCO) than running them on premises. For development and testing instances in particular, this cost advantage grows further when you take the following aspects into account:

- The number of environments required is often volatile: There are phases when you need many environments with high performance (e.g. during a UAT of a major "new feature" or an "upgrade" implementation), but then there are many other months where a single "small" testing instance to troubleshoot issues might be enough.

- Even during peak periods, such instances are often not needed 24/7 but only during usual business hours.

When running Oracle E-Business Suite on Oracle Cloud Infrastructure (OCI), this advantage is even more significant than with other cloud vendors: OCI lets you easily "rent" the licenses for the database and application service required for E-Business Suite "by the hour" in a PaaS model. This means that reducing the average number of CPU cores used throughout the year (by exploiting one or both of the aspects above) can yield very significant cost savings.
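
To get a feel for the order of magnitude, here is a back-of-the-envelope sketch comparing a weekday business-hours schedule with running 24/7. The 12-hour day and the 5-day week are assumptions for illustration, and the result covers only hourly billed compute and license time; storage and similar charges typically continue while an instance is stopped.

```shell
# Percentage of the weekly hours during which an instance is NOT running,
# given the hours per day and days per week it is actually needed.
billed_hours_saved_pct() {
  local hours_per_day="$1" days_per_week="$2"
  local running=$(( hours_per_day * days_per_week ))
  local total=$(( 24 * 7 ))
  echo $(( (total - running) * 100 / total ))
}

# Assumed schedule: 12 hours a day, Monday to Friday
echo "Hourly billed time avoided: $(billed_hours_saved_pct 12 5)%"
```

With these assumed values the instance runs 60 of 168 hours per week, so roughly 64% of the hourly billed time is avoided.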

Let's look at the second aspect and see how we can easily shut down E-Business Suite instances on OCI at night or on weekends.

Clean E-Business Suite shutdown

To automate pausing instances (and billing) at night, you first have to cleanly shut down the E-Business Suite application server and middle tier. As of today, there is no way of really "pausing" or "freezing" an instance without shutting everything down. Even though this is on the OCI roadmap, it remains to be seen whether a complex environment such as E-Business Suite will survive such a hibernation. I suggest the following stopEBS.sh script, in which you replace APPS_PWD and WEBLOGIC_PWD with your own values:

source /u01/install/APPS/EBSapps.env run
adstpall.sh -mode=allnodes apps/APPS_PWD << EOF
WEBLOGIC_PWD
EOF
sh /home/oracle/stop_apex122.sh
echo -n "Waiting for Concurrent Manager to go down"
while true; do
  $FND_TOP/bin/FNDSVCRG STATUS > /tmp/icmstatus2.txt
  cat /tmp/icmstatus2.txt
  if ! grep -q "Internal Concurrent Manager is Active" /tmp/icmstatus2.txt; then
    echo
    echo -n "Concurrent Manager is down now"
    break
  fi
  sleep 10
done
ps -fu oracle
sleep 60
ps -fu oracle
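
The wait loop in the script above polls forever if the Concurrent Manager never goes down. A minimal sketch of a bounded variant follows; the function name wait_until_gone and the limit of 60 polls are my own assumptions, not part of any Oracle tooling.

```shell
# Poll a status command until its output no longer contains a marker string,
# giving up after a maximum number of attempts.
# Usage: wait_until_gone "<marker>" <max_tries> <sleep_seconds> <command...>
# Note: a status command that fails or prints nothing counts as "gone".
wait_until_gone() {
  local marker="$1" max_tries="$2" interval="$3" try=1
  shift 3
  while [ "$try" -le "$max_tries" ]; do
    if ! "$@" 2>/dev/null | grep -q "$marker"; then
      return 0            # marker no longer reported: service is down
    fi
    sleep "$interval"
    try=$(( try + 1 ))
  done
  return 1                # still active after max_tries polls
}

# Example (60 polls x 10 s = 10 minutes is an assumed limit):
# wait_until_gone "Internal Concurrent Manager is Active" 60 10 \
#   "$FND_TOP/bin/FNDSVCRG" STATUS || echo "ICM still running, giving up" >&2
```

A non-zero return lets the calling script decide whether to abort the shutdown or to continue and stop the VM anyway.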

Stopping of the OCI infrastructure (and billing)

With that, you can initiate the shutdown of the VMs (in this case with Base Database on VM and a single Apps Tier on Compute) using a script stopInstance.sh to which you need to pass the environment name:
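
The script derives everything from the environment name: the host names are the lower-cased name plus a suffix, while the OCI display names use the name exactly as passed. The sketch below shows the host name part in isolation; it uses tr so that it also runs in a plain POSIX shell, which has the same effect as the bash expansion ${1,,} used in the script. TEST1 is a hypothetical environment name.

```shell
# Build the fully qualified host names that the scripts resolve with dig.
# tr lower-cases the name, which is what bash's ${1,,} does in the scripts.
derive_hosts() {
  local env_lc
  env_lc=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  [ -n "$env_lc" ] || { echo "usage: derive_hosts <ENV_NAME>" >&2; return 1; }
  echo "${env_lc}app01.appssubnet.ebsnetwork.oraclevcn.com"
  echo "${env_lc}db.dbsubnet.ebsnetwork.oraclevcn.com"
}

derive_hosts TEST1   # TEST1 is a hypothetical environment name
```

For TEST1 this prints test1app01.appssubnet.ebsnetwork.oraclevcn.com and test1db.dbsubnet.ebsnetwork.oraclevcn.com.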

COMPARTMENT_ID=ocid1.compartment.oc1..XXXXXXX

CONFIG_FILE=/u01/install/APPS/.oci/johannes.michler@promatis.de

ENV_NAME=$1

echo "Instance Name:"$ENV_NAME

HOST_APPS=${1,,}app01

IP_APPS=`dig +short ${HOST_APPS}.appssubnet.ebsnetwork.oraclevcn.com`

echo "IP Address Apps:"$IP_APPS

HOST_DB=${1,,}db

IP_DB=`dig +short ${HOST_DB}.dbsubnet.ebsnetwork.oraclevcn.com`

echo "IP Address DB:"$IP_DB

OCID_APPS=$(oci compute instance list --compartment-id $COMPARTMENT_ID --query "data [?\"display-name\" == '${ENV_NAME}app01'].id|join(',',@)" --config-file $CONFIG_FILE | tr -d '\"')

echo "OCID-APPS:"$OCID_APPS

OCID_DB_SYS=$(oci db system list --compartment-id $COMPARTMENT_ID --config-file $CONFIG_FILE --query "data [?\"display-name\" == '${ENV_NAME}'].id|join(',',@)"| tr -d '\"')

echo "OCI-DB-Sys:"$OCID_DB_SYS

OCID_DB_NODE=$(oci db node list --db-system-id $OCID_DB_SYS --config-file $CONFIG_FILE --compartment-id $COMPARTMENT_ID --query "data[].id|join(',',@)"| tr -d '\"')

echo "OCI-DB-Node:"$OCID_DB_NODE

echo Stopping apps tier

ssh $IP_APPS "./stopEBS.sh"

echo Stopping VM-DB

ssh $IP_DB "srvctl stop database -d \$ORACLE_UNQNAME -stopoption IMMEDIATE"

oci db node stop --config-file $CONFIG_FILE --db-node-id $OCID_DB_NODE

echo Now stopping Apps

oci --config-file $CONFIG_FILE compute instance action --action STOP --instance-id $OCID_APPS

The script makes use of the OCI Command Line Interface (CLI) to stop the database and the compute instance(s).
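
Because the lookups join all matching IDs with commas, a mistyped environment name yields an empty string and a duplicate display name yields several OCIDs. A small guard before the stop commands can catch both cases. This is only a sketch: the helper name is_single_ocid is made up, and the resource type strings (instance, dbsystem, dbnode) are what I would expect in the second field of the respective OCIDs, so please verify them against your own tenancy.

```shell
# Succeeds only if the value is exactly one OCID of the expected resource type.
# An empty value (no match) or a comma-joined list (several matches) fails.
is_single_ocid() {
  local expected_type="$1" value="$2"
  case "$value" in
    ""|*,*) return 1 ;;                          # nothing found, or more than one
    "ocid1.${expected_type}."*) return 0 ;;      # looks like the expected resource
    *) return 1 ;;                               # some other resource type or garbage
  esac
}

# Example use inside stopInstance.sh (resource type names are assumptions):
# is_single_ocid instance "$OCID_APPS"   || { echo "unexpected apps OCID: '$OCID_APPS'" >&2; exit 1; }
# is_single_ocid dbnode   "$OCID_DB_NODE" || { echo "unexpected DB node OCID: '$OCID_DB_NODE'" >&2; exit 1; }
```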

If you have the database on compute, you can stop it with the same kind of command that stops the compute instance for the apps tier.
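
For that database-on-compute case, a minimal sketch could wrap the compute stop command in a small function and call it for both VMs. The function name and the variable OCID_DB_VM are hypothetical; the oci call itself is the one used in the script above, with --wait-for-state STOPPED added so that the function only returns once each instance is down.

```shell
# Stop each given compute instance in turn and wait until it is STOPPED.
# Arguments: <oci-config-file> <instance-ocid>...
stop_compute_instances() {
  local config_file="$1" ocid
  shift
  for ocid in "$@"; do
    echo "Stopping $ocid"
    oci --config-file "$config_file" compute instance action \
      --action STOP --instance-id "$ocid" --wait-for-state STOPPED || return 1
  done
}

# Example, assuming OCID_DB_VM holds the OCID of the database VM (hypothetical variable):
# stop_compute_instances "$CONFIG_FILE" "$OCID_APPS" "$OCID_DB_VM"
```

As in the original script, the E-Business Suite services and the database should already have been shut down cleanly via ssh before the VMs are stopped.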

Keep in mind that you might also need to disable advanced monitoring to stop billing for the OCI Database Management service.

Bringing everything up again

Starting everything again works similarly. I'm using a startInstance.sh script as follows:

echo "starting startup"

COMPARTMENT_ID=ocid1.compartment.oc1..XXXXX

CONFIG_FILE=/u01/install/APPS/.oci/johannes.michler@promatis.de

ENV_NAME=$1

echo "Instance Name:"$ENV_NAME

HOST_APPS=${1,,}app01

IP_APPS=`dig +short ${HOST_APPS}.appssubnet.ebsnetwork.oraclevcn.com`

echo "IP Address Apps:"$IP_APPS

HOST_DB=${1,,}db

IP_DB=`dig +short ${HOST_DB}.dbsubnet.ebsnetwork.oraclevcn.com`

echo "IP Address DB:"$IP_DB

OCID_APPS=$(oci compute instance list --compartment-id $COMPARTMENT_ID --query "data [?\"display-name\" == '${ENV_NAME}app01'].id|join(',',@)" --config-file $CONFIG_FILE | tr -d '\"')

echo "OCID-APPS:"$OCID_APPS

OCID_DB_SYS=$(oci db system list --compartment-id $COMPARTMENT_ID --config-file $CONFIG_FILE --query "data [?\"display-name\" == '${ENV_NAME}'].id|join(',',@)"| tr -d '\"')

echo "OCI-DB-Sys:"$OCID_DB_SYS

OCID_DB_NODE=$(oci db node list --db-system-id $OCID_DB_SYS --config-file $CONFIG_FILE --compartment-id $COMPARTMENT_ID --query "data[].id|join(',',@)"| tr -d '\"')

echo "OCI-DB-Node:"$OCID_DB_NODE

echo Starting DB

oci db node start --config-file $CONFIG_FILE --db-node-id $OCID_DB_NODE --wait-for-state AVAILABLE

echo Now Starting Apps

oci --config-file $CONFIG_FILE compute instance action --action START --instance-id $OCID_APPS --wait-for-state RUNNING &

wait

echo "started DB and Apps"

sleep 30

until ssh $IP_DB "echo da" 2> /dev/null

do

echo "not ready, waiting 5"

sleep 5

done

echo "sleeping another 30, then start"

sleep 30

until ssh $IP_DB "srvctl start database -d \$ORACLE_UNQNAME"

do

echo "not ready, waiting 5"

sleep 5

done

until ssh $IP_APPS "echo da" 2> /dev/null

do

echo "not ready, waiting 5"

sleep 5

done

echo "sleeping another 30, then start"

sleep 30

echo "Just in case the mountpoint is not yet there"

ssh opc@$IP_APPS sudo mount /u01

ssh $IP_APPS ./startEBS.sh

More fine-grained scaling - even without downtimes and maybe on PROD

Of course, the above approach can be done in a less harsh manner. Instead of stopping the entire environment, you could, for example, stop just some of the application tiers at night. If you need six oacore servers to handle the daily work but only one during the night, you can shut down five servers every night. And since the system stays available this way, you could even do so on production. OCI has dynamic scaling of CPU and memory on the roadmap, so this may give even more flexibility in the future.
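
As a rough sketch of the oacore example, the function below only computes which managed servers to stop. It assumes the default naming scheme oacore_server1..N and that the servers are stopped with the standard 12.2 control script admanagedsrvctl.sh on the node where they run; both are assumptions you need to check against your own topology.

```shell
# Print the names of the managed servers to stop at night, keeping the
# first <keep> of <total> servers running.
# Assumes the default naming scheme oacore_server1..N.
oacore_servers_to_stop() {
  local total="$1" keep="$2" i
  i=$(( keep + 1 ))
  while [ "$i" -le "$total" ]; do
    echo "oacore_server$i"
    i=$(( i + 1 ))
  done
}

# Example: six servers by day, one at night
# for srv in $(oacore_servers_to_stop 6 1); do
#   # the control script asks for the WebLogic admin password
#   "$ADMIN_SCRIPTS_HOME/admanagedsrvctl.sh" stop "$srv"
# done
```

Keeping oacore_server1 running means the system stays reachable during the night, just with less capacity.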

Summary

Using the above scripts and some crontab entries (e.g. on the E-Business Suite Cloud Manager machine), you can easily avoid most of the costs for E-Business Suite Dev and Test instances while they're not needed.
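
The crontab entries could look roughly like the ones generated below. The script directory, log files and the times (stop at 19:00, start at 07:00, Monday to Friday) are assumptions for illustration; with this schedule the environment also stays down from Friday evening to Monday morning.

```shell
# Print crontab entries that stop an environment on weekday evenings and
# start it again on weekday mornings.
# Script locations, log paths and times are assumptions for illustration.
cron_entries() {
  local env_name="$1" script_dir="${2:-/home/oracle}"
  echo "0 19 * * 1-5 $script_dir/stopInstance.sh $env_name >> /tmp/stop_$env_name.log 2>&1"
  echo "0 7 * * 1-5 $script_dir/startInstance.sh $env_name >> /tmp/start_$env_name.log 2>&1"
}

# Example: append the entries for a hypothetical environment TEST1
# ( crontab -l 2>/dev/null; cron_entries TEST1 ) | crontab -
```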

There are many reasons to run E-Business Suite on OCI, as I've shown in previous blog posts. By dynamically scaling down the infrastructure during low or no-use periods, costs can be reduced significantly! If you're interested in trying it out, have a look at the Free Trial for OCI and the E-Business Suite on OCI Hands On Lab; see my previous posts on things to consider when doing so with brand new tenancies.